6WINDGate 5.0 Architecture: Are You Ready For 5G?

This is the second blog post in our series explaining the benefits of our new 6WINDGate 5.0, which has been selected by the world’s largest OEMs to build 5G networking solutions. As described in the first post, 6WINDGate fully integrates its Fast Path with Linux to benefit from the latest Linux kernel improvements. In short, Linux running 6WINDGate is still Linux. In this post, we detail the 6WINDGate 5.0 architecture and how it makes 6WINDGate’s accelerated data plane transparent to Linux applications, which is critical for efficient 5G networking.

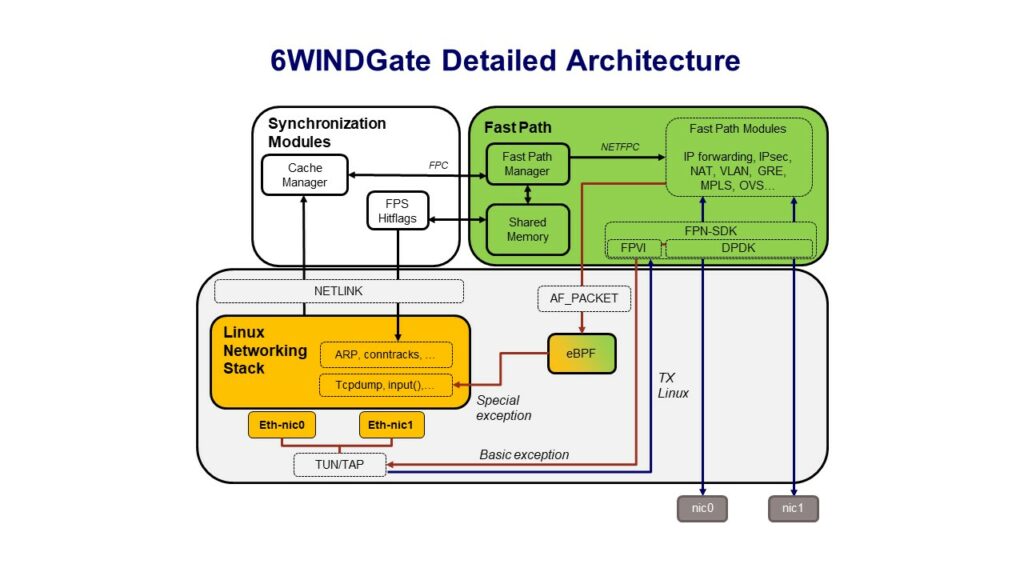

Fast Path and FPN-SDK

In the 6WINDGate architecture, all packets are received and transmitted by the Fast Path through DPDK. The 6WINDGate Fast Path Networking – SDK (FPN-SDK) provides an abstraction layer to the 6WINDGate Fast Path modules through the FPN API. The FPN-SDK is implementation-dependent; a specific FPN-SDK is required for a given implementation of the Fast Path for a processor environment (DPDK, processor SDK).

The 6WINDGate Fast Path modules (IP forwarding, IPsec, NAT, VLAN, GRE, MPLS, OVS…) process packets efficiently according to local information stored in the Shared Memory.

Exception Strategy and Continuous Synchronization

To achieve Fast Path transparency to Linux, 6WINDGate implements what we call “Linux – Fast Path synchronization”. It relies on two mechanisms: exception strategy and continuous synchronization.

When local information is missing in the Fast Path to process a packet, when a packet type is not supported by the Fast Path, or when a packet is destined to the local Control Plane, then it is diverted to the Linux Networking Stack. These packets are known as exception packets and this mechanism is called the exception strategy.

The Linux Networking Stack is responsible for processing packets that could not be processed at the Fast Path level. These packets are processed either by the 6WINDGate Linux Networking Stack or by the Control Plane. Note that, in most cases, exception packets account for only a few percent of the traffic.

In the case of exception packets due to lack of information, the information learned in the Linux Networking Stack during the processing of the packet will be transparently synchronized into the Fast Path. This way, subsequent packets of the same flow will then be handled by the Fast Path. This is the mechanism of continuous synchronization.
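The interplay of the two mechanisms can be illustrated with a small simulation. This is only a sketch under simplifying assumptions: the `SlowPath` and `FastPath` classes, the dictionary-based tables and the addresses are hypothetical, and the synchronization is done inline here, whereas in 6WINDGate it goes through the Cache Manager described later in this post.

```python
from dataclasses import dataclass, field


@dataclass
class SlowPath:
    """Hypothetical stand-in for the Linux Networking Stack."""
    routes: dict = field(default_factory=dict)  # destination -> next hop

    def process(self, dst):
        # Linux resolves the packet itself and returns the next hop, or None.
        return self.routes.get(dst)


@dataclass
class FastPath:
    """Hypothetical stand-in for the Fast Path and its local information."""
    slow_path: SlowPath
    table: dict = field(default_factory=dict)  # synchronized local information
    exceptions: int = 0

    def forward(self, dst):
        if dst in self.table:
            return ("fast", self.table[dst])
        # Exception strategy: local information is missing, divert to Linux.
        self.exceptions += 1
        next_hop = self.slow_path.process(dst)
        if next_hop is not None:
            # Continuous synchronization (simplified): what Linux learned
            # becomes available to the Fast Path for the rest of the flow.
            self.table[dst] = next_hop
        return ("exception", next_hop)


linux = SlowPath(routes={"10.0.0.7": "192.168.1.1"})
fp = FastPath(slow_path=linux)
results = [fp.forward("10.0.0.7") for _ in range(3)]
print(results, "exceptions:", fp.exceptions)
```

In this model only the first packet of the flow is an exception; the following ones are handled from the synchronized table.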

Exceptions

Two kinds of exceptions are defined according to the processing to be applied to the packet:

- The first type of exception is called “Basic Exception”. For this type of exception, the Fast Path can provide the original incoming packet to the Linux Networking Stack, where it is processed as incoming on a standard network interface.

For example, a Basic Exception is raised when the route lookup fails during simple IP forwarding.

- The second type of exception is called “Special Exception”. This type of exception is raised when the original packet cannot be restored and sent by the Fast Path to the Linux Networking Stack. The exception packet needs to be injected in a specific location in the Linux Networking Stack packet processing path.

The processing of Special Exceptions can rely on an eBPF program. The role of this program is to drive the packets to the right hook inside the Linux Networking Stack for further processing aligned with the work already done by the Fast Path. For example, if a GRE packet is processed by the Fast Path and the inner packet is intended for local delivery to a routing daemon, the inner packet is sent to the Linux GRE interface thanks to the eBPF redirect function. This way, the inner packet is received on the Linux GRE interface as expected by the routing daemon.

FPVI

The Fast Path Virtual Interface (FPVI) allows packets to be exchanged between the Fast Path and the Linux Networking Stack. The FPVI makes Fast Path ports appear as netdevices in the Linux Networking Stack.

The purpose of the FPVI is to:

- Provide a physical NIC representor in Linux for configuration, monitoring and traffic capture.

- Send packets from Linux to the Fast Path (locally generated traffic).

- Exchange exception packets between the Fast Path and Linux.

The FPVI is implemented in Linux using the TUN/TAP driver, and in the Fast Path through the FPN-SDK using the DPDK virtio-user PMD, which provides a virtual port for each TUN/TAP interface.

Packets to be sent locally by the Linux Networking Stack are directly injected in the outgoing flow to be processed by the Fast Path, using the TUN/TAP Linux driver.

The FPVI implements the exception strategy as follows:

- For Basic Exceptions, the FPVI implements a standard processing through the netif_rx function of the TUN/TAP Linux driver.

- For Special Exceptions, on the ingress path, packets are injected at the right place into the Linux Networking Stack thanks to an eBPF program, as explained in the next section. On the egress path, packets are sent directly using the standard sendmsg() API.

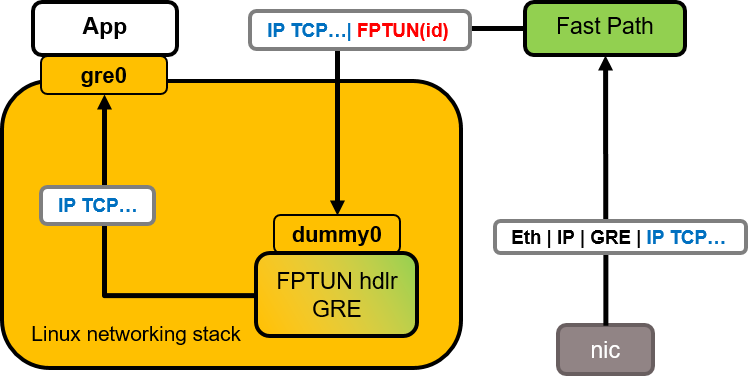

Special Exception with eBPF

The mechanism for Special Exception makes use of eBPF programs.

eBPF (extended Berkeley Packet Filter) is an extension of BPF, which was initially designed for BSD systems to filter packets early in the kernel and avoid useless copies to userspace applications like “tcpdump”. Thanks to networking hooks like Traffic Control (TC) and eXpress Data Path (XDP), eBPF extends BPF beyond packet capture to general packet processing, for example to implement anti-DDoS protection or to model hardware components.

An eBPF program is written in a restricted C dialect against the uapi/linux/bpf.h Linux kernel API. A compiler translates this program into eBPF bytecode. This binary can then be loaded into the kernel, verified by the kernel to make sure there is no unbounded loop or unauthorized memory access, and finally executed.

In 6WINDGate the role of the Special Exception eBPF program is to drive the packets to the right hook inside the Linux Networking Stack for further processing aligned with the work already done by the Fast Path.

The Fast Path sends a Special Exception via a dummy interface to which the eBPF program is attached with TC. To specify which operation is needed on the packet, metadata is attached to the packet by means of a specific trailer called FPTUN. For example, the trailer is filled with the index of the interface into which the packet should be injected. The eBPF program responsible for Special Exception processing is therefore called the FPTUN handler.

The following figure is an example of GRE packets being processed by the Fast Path. The inner TCP packet should be delivered to the application as coming from the Linux GRE interface.

The Fast Path receives the packet, decapsulates the GRE header and sees that the inner packet is intended for local delivery. It appends a FPTUN trailer with the index of Linux gre0 interface to the packet and sends it to the dummy0 interface. The eBPF FPTUN handler receives the packet, retrieves the FPTUN information using the bpf_skb_load_bytes() API, removes the trailer using the bpf_skb_change_tail() API, and finally the packet is redirected using the bpf_redirect() API to the ingress path of the gre0 interface.

The value of the interface index is known thanks to the Linux – Fast Path synchronization mechanism.
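The three steps performed by the FPTUN handler (load the trailer, trim it, redirect the packet) can be mimicked in userspace to make the data flow concrete. The sketch below is not the 6WINDGate handler and not an eBPF program: the trailer layout (a 4-byte magic followed by a 4-byte interface index), the magic value and the interface index `7` are all assumptions made for illustration, since the actual FPTUN format is not described in this post.

```python
import struct

# Hypothetical trailer layout: 4-byte magic + 4-byte ifindex, network byte
# order. The real FPTUN format is not documented here.
FPTUN_TRAILER = struct.Struct("!4sI")
FPTUN_MAGIC = b"FPTN"


def fast_path_append_trailer(inner_packet: bytes, ifindex: int) -> bytes:
    """Fast Path side: tag the decapsulated packet with its target interface."""
    return inner_packet + FPTUN_TRAILER.pack(FPTUN_MAGIC, ifindex)


def fptun_handler(frame: bytes):
    """Handler side: mirrors the load / trim / redirect sequence."""
    if len(frame) < FPTUN_TRAILER.size:
        raise ValueError("frame too short to carry a trailer")
    # 1. Read the trailer (bpf_skb_load_bytes() in the eBPF program).
    offset = len(frame) - FPTUN_TRAILER.size
    magic, ifindex = FPTUN_TRAILER.unpack_from(frame, offset)
    if magic != FPTUN_MAGIC:
        raise ValueError("not an FPTUN frame")
    # 2. Remove the trailer (bpf_skb_change_tail() in the eBPF program).
    packet = frame[:offset]
    # 3. Hand back the target interface and the restored packet
    #    (bpf_redirect() to the interface ingress path in the eBPF program).
    return ifindex, packet


GRE0_IFINDEX = 7  # hypothetical value, learned through synchronization
inner = b"inner TCP segment"
frame = fast_path_append_trailer(inner, GRE0_IFINDEX)
ifindex, packet = fptun_handler(frame)
print(ifindex, packet)
```

Carrying the metadata in a trailer rather than a header means the packet bytes seen by Linux after trimming are exactly the inner packet, with no offset adjustment needed.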

Synchronization

The Cache Manager is a userland software module that performs synchronization between the Linux Networking Stack and the Fast Path. It listens to the kernel updates (Netlink messages) done by the Control Plane (ARP and NDP entries, L3 routing tables, Security Associations…) and the Management Plane. The Cache Manager synchronizes the Fast Path with this information. Synchronization is made thanks to the FPC API. The Cache Manager sends messages including commands to the Fast Path Manager. Thanks to the Cache Manager, no change is required in the Control Plane and the Management Plane to be integrated with Fast Path modules.

The Fast Path Manager is a userland software module and can be considered as a Fast Path Linux driver. The Fast Path Manager receives command messages from the Cache Manager through the FPC API and analyses these commands to update information for Fast Path modules. The Fast Path sends acknowledgment messages (error management) to the Fast Path Manager using the FPC API.

The update of information by the Fast Path Manager for Fast Path modules can use two different mechanisms:

- The Fast Path Manager writes relevant information for the different Fast Path modules, for instance routing entries, ARP entries, security policies, security associations… in a Shared Memory,

- The Fast Path Manager uses NETFPC. NETFPC is the transport protocol used to communicate between a Fast Path module and its co-localized Fast Path via a network pseudo-interface. This protocol can be used when a notification must be directly sent to a Fast Path module.
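The Shared Memory path of this synchronization chain can be sketched as follows. It is a toy model, not the FPC API: the class names, the tuple-based command format and the dictionary standing in for the Shared Memory are assumptions. Only the Netlink message type names (`RTM_NEWROUTE`, `RTM_DELROUTE`, `RTM_NEWNEIGH`, `RTM_DELNEIGH`) are real rtnetlink identifiers, and the boolean return value only loosely stands in for acknowledgment messages.

```python
from dataclasses import dataclass, field


@dataclass
class FastPathManager:
    """Applies commands to a dict standing in for the Shared Memory."""
    shared_memory: dict = field(
        default_factory=lambda: {"route": {}, "neigh": {}}
    )

    def handle(self, command):
        op, table, key, value = command  # hypothetical command format
        if table not in self.shared_memory:
            return False
        if op == "add":
            self.shared_memory[table][key] = value
        elif op == "del":
            self.shared_memory[table].pop(key, None)
        else:
            return False
        return True


class CacheManager:
    """Translates kernel updates into commands for the Fast Path Manager."""

    # Netlink message type -> (operation, table)
    TRANSLATION = {
        "RTM_NEWROUTE": ("add", "route"),
        "RTM_DELROUTE": ("del", "route"),
        "RTM_NEWNEIGH": ("add", "neigh"),
        "RTM_DELNEIGH": ("del", "neigh"),
    }

    def __init__(self, fpm):
        self.fpm = fpm

    def on_kernel_update(self, msg_type, key, value=None):
        if msg_type not in self.TRANSLATION:
            return False  # update not relevant to the Fast Path
        op, table = self.TRANSLATION[msg_type]
        return self.fpm.handle((op, table, key, value))


fpm = FastPathManager()
cm = CacheManager(fpm)
cm.on_kernel_update("RTM_NEWROUTE", "10.1.0.0/16", "192.168.1.1")
cm.on_kernel_update("RTM_NEWNEIGH", "192.168.1.1", "52:54:00:12:34:56")
cm.on_kernel_update("RTM_DELNEIGH", "192.168.1.1")
print(fpm.shared_memory)
```

The Control Plane never appears in this code: it only updates the kernel, which is why no change is required on its side.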

Fast Path Statistics and Hitflags

The Fast Path Statistics module synchronizes the statistics of the Fast Path into the Linux Networking Stack. Without this synchronization, the system statistics would be inaccurate as the Linux Networking Stack is not aware of the traffic managed by the Fast Path.

For instance, an IKE daemon like strongSwan can rely on up-to-date XFRM statistics, without any patch, even though all the IPsec traffic is being handled by the Fast Path.

Not all kernel statistics can be updated using a userspace API. In particular, at the time of writing there is no API to update the interface statistics or IP MIB. However, a library can be pre-loaded for applications using Netlink, like iproute2, net-snmp, bmon, so that Netlink requests for statistics can be updated transparently with the Fast Path statistics from the Shared Memory.

For other types of management applications, APIs are provided to collect both the Linux and the Fast Path interface and IP MIB statistics.
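As an illustration of what an application ends up seeing, the sketch below combines per-interface counters from both sources. It assumes that combining means summing counter by counter, which this post does not state explicitly; the function name and the counter values are hypothetical.

```python
from collections import Counter


def merged_interface_stats(kernel_stats, fast_path_stats):
    """Sum kernel counters with Fast Path counters, key by key (assumption)."""
    merged = Counter(kernel_stats)
    merged.update(fast_path_stats)  # Counter.update adds counts
    return dict(merged)


# Linux only saw the exception packets; the Fast Path handled the rest.
kernel = {"rx_packets": 120, "tx_packets": 80}
fast_path = {"rx_packets": 9880, "tx_packets": 9920}
total = merged_interface_stats(kernel, fast_path)
print(total)
```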

Some kernel objects like ARP entries or conntracks follow a state machine that depends on the usage by the Linux Data Plane. As packets are processed by the Fast Path, Linux is not aware that these entries are used, and a mechanism is needed to prevent them from expiring.

This is the role of the Fast Path Hitflags mechanism. As a result, the state of kernel objects remains alive as long as the Fast Path is actively using them and the packet processing remains steady in the Fast Path.

Statistics / Hitflags are implemented through the following mechanisms:

- The Fast Path modules update the Shared Memory with statistics / Hitflags,

- The FPS daemon reads the Shared Memory statistics,

- The Hitflags daemon reads the Shared Memory entries, collects the entries marked with the hitflags and resets the flag,

- The FPS / Hitflags daemons update the statistics / kernel states, using the Netlink interface.
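The collect-and-reset behaviour of the Hitflags daemon can be sketched as a single scan function. This is a simplified model: the `NeighEntry` class, the list standing in for the Shared Memory and the `refresh` callback (which would be a Netlink update in practice) are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class NeighEntry:
    """Hypothetical Shared Memory entry mirroring a kernel ARP entry."""
    ip: str
    hit: bool = False


def fast_path_use(entry):
    # The Fast Path marks an entry each time it uses it to process a packet.
    entry.hit = True


def hitflags_scan(shared_memory, refresh):
    """Collect marked entries, reset their flag, and refresh kernel state."""
    refreshed = []
    for entry in shared_memory:
        if entry.hit:
            entry.hit = False   # reset so the next scan sees only new usage
            refresh(entry.ip)   # stands in for the Netlink update
            refreshed.append(entry.ip)
    return refreshed


entries = [NeighEntry("192.168.1.1"), NeighEntry("192.168.1.2")]
fast_path_use(entries[0])

kernel_refreshes = []
first = hitflags_scan(entries, kernel_refreshes.append)
second = hitflags_scan(entries, kernel_refreshes.append)
print(first, second)
```

Resetting the flag during the scan is what lets unused entries age out normally in Linux: an entry is refreshed only if the Fast Path used it since the previous scan.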

6WINDGate 5.0 customers have already selected our new architecture to build their 5G networking solutions. Stay tuned for the next 6WINDGate 5.0 blog post in this series, in which we will share more about our new Container support. In the meantime, please contact us with any questions.
