It can seamlessly accommodate the following environments:

- Bare Metal

- KVM through libvirt

- Proxmox

- OpenStack

- VMware

- Amazon Web Services

While virtualized environments provide tools to deploy 6WIND vRouter images very efficiently, things get more complex with a Bare Metal environment.

The one-shot approach is installing 6WIND vRouter on a USB stick and transferring the software onto the device’s internal storage.

This is easy for a quick evaluation but it has several drawbacks:

- Physical access to the server is required

- USB access must be allowed

- Does not scale when you need to install several servers

For this example, we’ll use PXE for day-0 remote deployment, Cloud-Init for day-1 initial remote access and Ansible for day-2 further configuration.

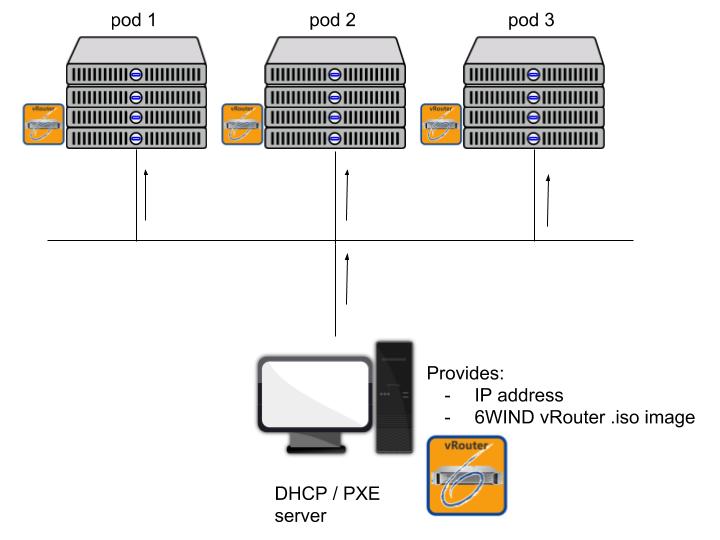

PXE Deployment

Pods 1, 2 and 3 are collections of hosts with different hardware capabilities (CPU, RAM, NICs, etc.).

The DHCP/PXE server distributes the same 6WIND vRouter image to the hosts in pod 1, pod 2 and pod 3.

The following grub configuration will be delivered through PXE to let the hosts boot the vRouter software:

menuentry 'Virtual Border Router network installer' {

set root='(pxe)'

set kernel_image="/vmlinuz"

set ramdisk="https://www.6wind.com/initrd.img"

(...)

set boot_opts="$boot_opts fetch=http://$pxe_default_server/vrouter.iso ds=nocloud-net;s=http://$pxe_default_server/cloud-init/"

linux $kernel_image $boot_opts

initrd $ramdisk

}

Two items are important in this configuration file:

- fetch: the URL from which the vRouter ISO is downloaded. $pxe_default_server is automatically replaced by the IP address of the PXE server

- ds=nocloud-net;s: the URL at which the vRouter finds its cloud-init configuration. $pxe_default_server is automatically replaced by the IP address of the PXE server
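To make the substitution concrete, here is a minimal Python sketch of how the two options expand once the server address is known. It only mimics the replacement for illustration; on a real host the substitution is performed at boot time, not by Python. The address 192.168.235.1 is an assumption (it is the server address that appears in the runcmd URL of the user-data file in this post), and `expand_boot_opts` is a hypothetical helper name.

```python
from string import Template

# Boot options copied from the grub entry; $pxe_default_server is a placeholder.
BOOT_OPTS = (
    "fetch=http://$pxe_default_server/vrouter.iso "
    "ds=nocloud-net;s=http://$pxe_default_server/cloud-init/"
)


def expand_boot_opts(pxe_server):
    """Fill in the PXE server address and split the options into key/value pairs."""
    expanded = Template(BOOT_OPTS).substitute(pxe_default_server=pxe_server)
    opts = {}
    for token in expanded.split():
        # Split on the first "=" only: the ds value itself contains "s=<url>".
        key, _, value = token.partition("=")
        opts[key] = value
    return opts


# Assumed PXE server address, for illustration only.
opts = expand_boot_opts("192.168.235.1")
datasource, _, seed_url = opts["ds"].partition(";s=")

print("ISO URL:       ", opts["fetch"])
print("Datasource:    ", datasource)
print("Cloud-init URL:", seed_url)
```

The sketch shows that both URLs point at the same server, so moving the PXE server only requires a change in one place.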

IP addresses are allocated by the DHCP server thanks to the following configuration file (we use dnsmasq):

(...)

# DHCP configuration

dhcp-range=192.168.235.10,192.168.235.150,12h

dhcp-host=14:18:77:66:c7:23,host1,192.168.235.13

dhcp-host=52:54:00:12:34:57,host2,192.168.235.36

dhcp-boot=boot/grub/i386-pc/core.0

# TFTP configuration

enable-tftp

tftp-root=/var/lib/tftpboot

host1 and host2 are identified by their MAC addresses. Their IP addresses are static and are used to distinguish the hosts during provisioning and configuration.
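Since the static IP address becomes the key for everything that follows, it can help to extract the MAC-to-host mapping from the dnsmasq configuration. The Python sketch below does this under a simplifying assumption: every dhcp-host line has exactly the `MAC,name,IP` form used above (dnsmasq accepts other forms that this sketch does not handle). `parse_dhcp_hosts` is a hypothetical helper name.

```python
# The dhcp-range and dhcp-host lines from the dnsmasq configuration.
DNSMASQ_CONF = """\
dhcp-range=192.168.235.10,192.168.235.150,12h
dhcp-host=14:18:77:66:c7:23,host1,192.168.235.13
dhcp-host=52:54:00:12:34:57,host2,192.168.235.36
"""


def parse_dhcp_hosts(conf):
    """Return {mac: (hostname, ip)} for every 'dhcp-host=MAC,name,IP' line."""
    hosts = {}
    for line in conf.splitlines():
        line = line.strip()
        if not line.startswith("dhcp-host="):
            continue  # ignore dhcp-range, comments and other options
        mac, name, ip = line.split("=", 1)[1].split(",")
        hosts[mac.lower()] = (name, ip)
    return hosts


hosts = parse_dhcp_hosts(DNSMASQ_CONF)
for mac, (name, ip) in hosts.items():
    print(f"{mac} -> {name} ({ip})")
```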

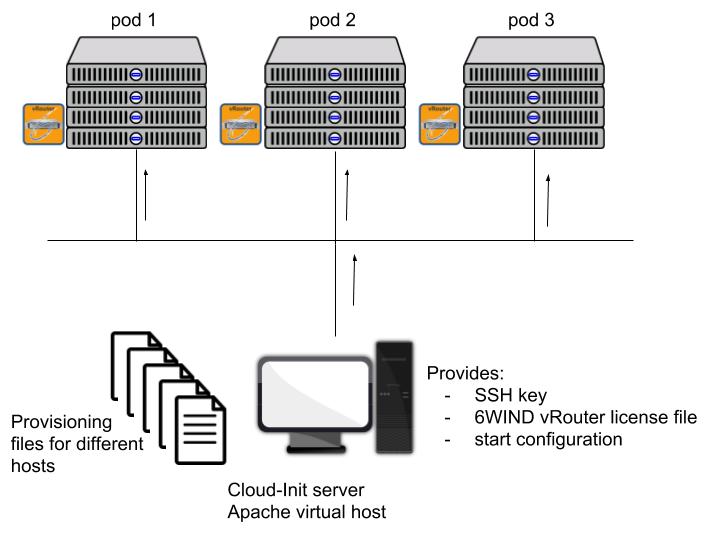

Cloud-Init Provisioning

The Cloud-Init server provides a different configuration to each host. Using an Apache virtual host, the content served differs according to the IP address of the requesting host.

For example, the configuration file for host1 will be the following:

# cat $HTTP_DIR/192.168.235.13/meta-data

instance-id: host1

local-hostname: host1

EOF

# cat $HTTP_DIR/192.168.235.13/user-data

#cloud-config

ssh_authorized_keys:

- ecdsa-sha2-nistp256 AAAAE2VjZHNhLXNoYTItbmlzdHAyNTYAAAAIbmlzdHAyNTYAAABBBI6YcZ4KhPAFIV2C9S/6g9P7e7pemCfZRUl3Mo0xwMkTay5OTD5uS9IKaoOgUNJvMnu7yM380mxHDWPnqatYFEo= root@pxeserver

runcmd:

- '/usr/bin/wget "http://192.168.235.1/cloud-init/vrouter.startup" -O /etc/sysrepo/data/vrouter.startup'

- '/usr/bin/install.sh -r -d /dev/sda'

- '/sbin/reboot'

EOF

# cat $HTTP_DIR/192.168.235.13/vrouter.startup

{

"vrouter:config": {

"vrouter-system:system": {

"hostname": "host1"

},

...

}

After the Cloud-Init phase host1 will be provisioned with:

- 6WIND vRouter installed on a physical drive

- SSH key

- startup configuration

All hosts will be provisioned individually.
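With more than a couple of hosts, the per-host files are easier to generate than to write by hand. The following Python sketch creates one directory per IP address with a meta-data file, mirroring the `$HTTP_DIR/192.168.235.13/meta-data` layout of host1. It rests on assumptions: the host2 entry is inferred by analogy with host1, a temporary directory stands in for `$HTTP_DIR`, and `write_meta_data` is a hypothetical helper name. The user-data and vrouter.startup files are left out.

```python
import tempfile
from pathlib import Path

# IP address -> hostname, matching the static DHCP leases.
HOSTS = {
    "192.168.235.13": "host1",
    "192.168.235.36": "host2",  # assumed by analogy with host1
}


def write_meta_data(http_dir, hosts):
    """Create <http_dir>/<ip>/meta-data for every host and return the paths."""
    written = []
    for ip, name in hosts.items():
        host_dir = Path(http_dir) / ip
        host_dir.mkdir(parents=True, exist_ok=True)
        meta = host_dir / "meta-data"
        meta.write_text(f"instance-id: {name}\nlocal-hostname: {name}\n")
        written.append(meta)
    return written


# A temporary directory stands in for the web server's document root.
http_dir = Path(tempfile.mkdtemp())
for path in write_meta_data(http_dir, HOSTS):
    print(path.relative_to(http_dir))
```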

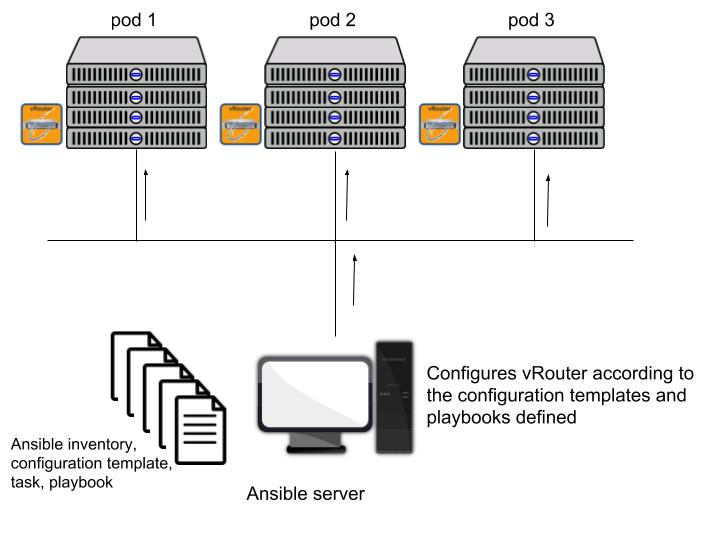

Ansible Configuration

Ansible is used to remotely configure the different vRouters.

Ansible can use different APIs:

- ssh

- NETCONF

6WIND vRouter is compatible with both methods but in the following example, we’ll use the NETCONF API to directly interact with 6WIND vRouter NETCONF/YANG management.

First, you’ll need to define your host inventory:

# cat inventory/hosts

[Pod_1]

host1 ansible_ssh_host=192.168.235.13 ansible_connection=netconf ansible_user=admin ansible_ssh_pass=******** ansible_network_os=vrouter
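For several pods, the inventory can be rendered from the same host list used for DHCP. The Python sketch below is one possible way to do it, not part of the original setup: `render_inventory` is a hypothetical helper, and the password variable is deliberately omitted because the original value is masked.

```python
# Pod name -> {hostname: IP address}; only host1 in Pod_1 comes from the example.
PODS = {
    "Pod_1": {"host1": "192.168.235.13"},
}


def render_inventory(pods, user="admin", network_os="vrouter"):
    """Render an INI-style Ansible inventory with one group per pod."""
    lines = []
    for pod, hosts in pods.items():
        lines.append(f"[{pod}]")
        for name, ip in hosts.items():
            lines.append(
                f"{name} ansible_ssh_host={ip} ansible_connection=netconf "
                f"ansible_user={user} ansible_network_os={network_os}"
            )
        lines.append("")  # blank line between groups
    return "\n".join(lines)


inventory = render_inventory(PODS)
print(inventory)
```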

Then define a configuration template:

# cat playbooks/roles/Pod_1/host1/templates/hostname_config.xml

<config xmlns="urn:ietf:params:xml:ns:netconf:base:1.0">

  <config xmlns="urn:6wind:vrouter">

    <system xmlns="urn:6wind:vrouter/system">

      <hostname>host1</hostname>

    </system>

  </config>

</config>

This configuration template follows the YANG model specific to 6WIND vRouter and is sent over the NETCONF connection.
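The same payload can also be built programmatically, which avoids hand-editing XML for every host. Below is a minimal Python sketch using the standard library's ElementTree with the three namespaces from the template above. `hostname_config` is a hypothetical helper name. Note that ElementTree serializes with generated prefixes (`ns0`, `ns1`, ...) instead of default namespaces; the result should be equivalent for a namespace-aware parser, but that has not been checked against a vRouter here.

```python
import xml.etree.ElementTree as ET

# Namespaces taken from the hostname_config.xml template.
NETCONF_NS = "urn:ietf:params:xml:ns:netconf:base:1.0"
VROUTER_NS = "urn:6wind:vrouter"
SYSTEM_NS = "urn:6wind:vrouter/system"


def hostname_config(hostname):
    """Build the NETCONF <config> payload that sets the system hostname."""
    root = ET.Element(f"{{{NETCONF_NS}}}config")
    vrouter = ET.SubElement(root, f"{{{VROUTER_NS}}}config")
    system = ET.SubElement(vrouter, f"{{{SYSTEM_NS}}}system")
    # <hostname> inherits the namespace of <system> in the original template.
    ET.SubElement(system, f"{{{SYSTEM_NS}}}hostname").text = hostname
    return ET.tostring(root, encoding="unicode")


xml_text = hostname_config("host1")
print(xml_text)
```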

The task applying this configuration through netconf_config is defined as follows:

# cat playbooks/roles/Pod_1/host1/tasks/hostname_config.yaml

---

- name: Set hostname server in the device

  netconf_config:

    content: "{{ lookup('file', '../templates/hostname_config.xml') }}"

Finally, the playbook that executes this task on host1 is:

# cat playbooks/playbook.yaml

---

- name: Sample playbook

  hosts: host1

  tasks:

    - import_tasks: "./roles/Pod_1/host1/tasks/hostname_config.yaml"

# ansible-playbook playbooks/playbook.yaml -v

Using ~/ansible-playbook/ansible.cfg as config file

PLAY [test netconf] ****************************************

TASK [Gathering Facts] *************************************

ok: [host1]

TASK [Set hostname server in the device] *******************

The hostname is properly set to host1:

# ssh admin@192.168.235.13

Welcome to Virtual Border Router - 2.0.2

host1> show state system hostname

host1

This is a very simple example with a static configuration, but you can take advantage of Ansible's flexibility to automate the configuration of 6WIND vRouters across your network topologies.

Are you ready to automate your bare metal vRouter deployment? Contact us today to discuss which scenario best suits your requirements.

Aurélien Degeorges, Support Engineer for 6WIND, also contributed to this blog.