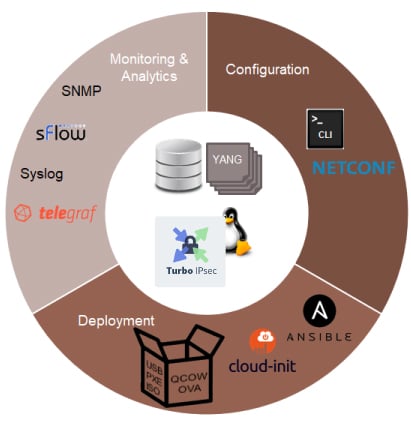

Automation and orchestration are key in modern networking deployments, as we showed in a previous blog post. Centralized management frameworks provide deployment, license management, monitoring and analytics, configuration, and lifecycle management of network services. These network services are provided by Virtual Border Routers, or vRouters, which must therefore implement the right APIs to be managed properly.

At 6WIND, we are making our vRouters ready for integration with such next-gen management frameworks.

Deployment: We provide packaged images for bare metal, KVM, VMware and AWS, and leverage Linux cloud-init and Ansible to enable customization of the system.

Monitoring: We support the traditional SNMP and syslog mechanisms, plus data plane telemetry through sFlow, and graphical analytics backed by a time-series database.

In this post, I will focus on the configuration capabilities of the vRouter, describing the concepts behind the CLI and the NETCONF/YANG-based management engine, and walking through a simple network configuration with the CLI.

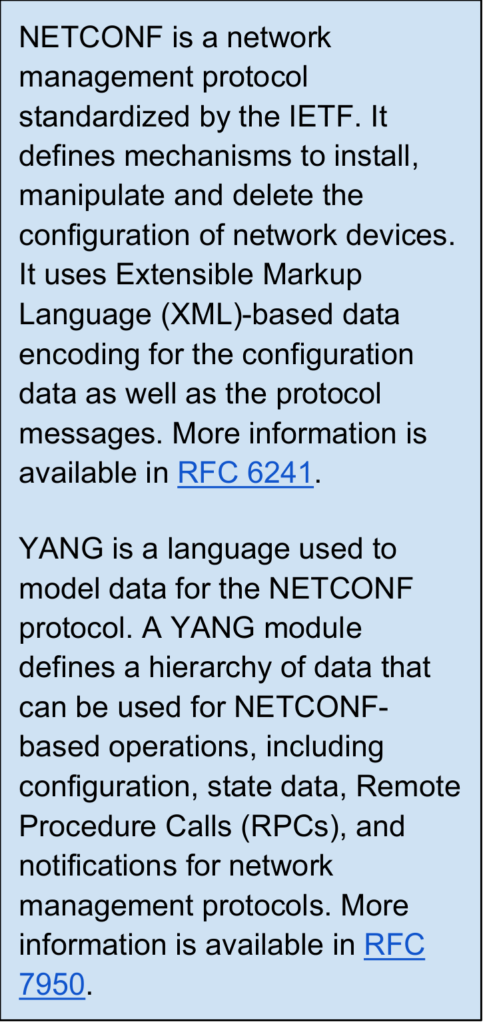

The CLI is actually a NETCONF client that communicates with the vRouter’s YANG-based configuration engine. Its command names and statements follow the syntax and the hierarchical organization of the vRouter YANG models. Data consistency is checked against the YANG model, so that syntax errors are detected early. The configuration engine supports transactions and rollback on error.
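To make the transaction idea concrete, here is a minimal Python sketch. It is not the vRouter's implementation: the `Datastore` class, its dictionary layout, and the single validation rule (an interface must reference a port, mirroring the walkthrough later in this post) are assumptions chosen for illustration.

```python
import copy


class ValidationError(Exception):
    """Raised when a candidate configuration violates the toy model."""


class Datastore:
    """Hypothetical running/candidate datastore, for illustration only."""

    def __init__(self):
        self.running = {"interfaces": {}}
        self.candidate = copy.deepcopy(self.running)

    def edit(self, name, **settings):
        # Edits only touch the candidate copy, never the running configuration.
        self.candidate["interfaces"].setdefault(name, {}).update(settings)

    def validate(self):
        # Assumed rule: every interface must reference a port.
        for name, settings in self.candidate["interfaces"].items():
            if "port" not in settings:
                raise ValidationError(f"interface {name}: missing port")

    def commit(self):
        try:
            self.validate()
        except ValidationError:
            # Roll back: drop the pending edits and restart from running.
            self.candidate = copy.deepcopy(self.running)
            raise
        # All edits become visible at once, or not at all.
        self.running = copy.deepcopy(self.candidate)


ds = Datastore()
ds.edit("mgt0")  # no port yet, so the commit below is rejected
try:
    ds.commit()
except ValidationError as err:
    print("commit rejected:", err)

ds.edit("mgt0", port="pci-b0s3")
ds.commit()
print(ds.running)
```

The point of the design is that the running configuration is only ever replaced by a candidate that has passed validation as a whole, so a failed commit leaves it untouched.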

The NETCONF API can also be used from any NETCONF client to configure and monitor the router remotely, which enables automation and orchestration. Let’s look in more detail at the concepts behind the CLI and the new management engine.

Industry-standard CLI with a Linux shell taste

The CLI comes with traditional features, such as completion, history and contextual help. Users can walk the configuration tree as they would browse a file system: for example, / jumps to the root of the configuration and .. moves one level up. Both relative and absolute paths can be used to refer to configuration data, which makes browsing very efficient.
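The file-system analogy can be sketched in a few lines of Python. This is only an illustration of how relative and absolute paths resolve against the current position in a tree; the `resolve` helper and the node names in the example paths are hypothetical and are not taken from the vRouter's YANG models.

```python
import posixpath


def resolve(current, target):
    """Resolve a CLI-style path against the current position in the tree."""
    # posixpath.join discards `current` when `target` is absolute,
    # and normpath collapses ".." and "." segments.
    return posixpath.normpath(posixpath.join(current, target))


print(resolve("/vrf/main/interface", ".."))        # one level up
print(resolve("/vrf/main/interface", "/"))         # back to the root
print(resolve("/vrf/main", "interface/physical"))  # relative path
print(resolve("/vrf/main", "/system/auth"))        # absolute path
```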

NETCONF/YANG-based engine

The management engine comprises a YANG-based datastore and a NETCONF server. It supports all the required protocol operations to read and write the configuration: <get>, <get-config>, <edit-config>, <copy-config> and so on. As explained, the CLI is actually a client that connects locally to this NETCONF server.
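As an illustration of what a client sends for one of these operations, the sketch below builds a `<get-config>` request for the running datastore using only the Python standard library. The element names and the namespace come from the NETCONF base protocol; the `build_get_config` helper and the message ID are assumptions made for this example. It only produces the XML payload: a real client would also need the SSH transport, the hello exchange and message framing, which are left out here.

```python
import xml.etree.ElementTree as ET

# Namespace of the NETCONF base protocol.
NETCONF_NS = "urn:ietf:params:xml:ns:netconf:base:1.0"

# Serialize NETCONF elements with a default namespace instead of "ns0:".
ET.register_namespace("", NETCONF_NS)


def build_get_config(message_id, source="running"):
    """Return a NETCONF <get-config> request as an XML string (hypothetical helper)."""
    rpc = ET.Element(f"{{{NETCONF_NS}}}rpc", {"message-id": str(message_id)})
    get_config = ET.SubElement(rpc, f"{{{NETCONF_NS}}}get-config")
    src = ET.SubElement(get_config, f"{{{NETCONF_NS}}}source")
    # The datastore to read is named by an empty child element, e.g. <running/>.
    ET.SubElement(src, f"{{{NETCONF_NS}}}{source}")
    return ET.tostring(rpc, encoding="unicode")


print(build_get_config(101))
```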

Clear separation between configuration and state data

The management engine stores separate configuration and state data for each feature. Compared to the configuration part, the state part includes additional runtime information, typically statistics. State data can be displayed from anywhere in the CLI using the get command, so that users can review the current state while building their configuration.
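The relationship between the two kinds of data can be pictured with a small Python sketch. The dictionary layout, the `build_state` helper and every runtime value below (MAC address, IP address, counters) are made up for illustration; they do not reflect the vRouter's actual data model.

```python
# What the user configured (hypothetical layout).
config = {"mgt0": {"port": "pci-b0s3", "dhcp": True}}

# What only the running system knows (illustrative values).
runtime = {
    "mgt0": {
        "oper-status": "UP",
        "mac": "00:00:5e:00:53:01",
        "ipv4": "192.0.2.10/24",
        "rx-packets": 1204,
        "tx-packets": 987,
    }
}


def build_state(config, runtime):
    """State = configured values plus runtime-only values for each interface."""
    return {
        name: {**settings, **runtime.get(name, {})}
        for name, settings in config.items()
    }


state = build_state(config, runtime)
print(sorted(state["mgt0"]))
```

Reading the state therefore shows everything that was configured, plus values that cannot be configured at all, such as counters.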

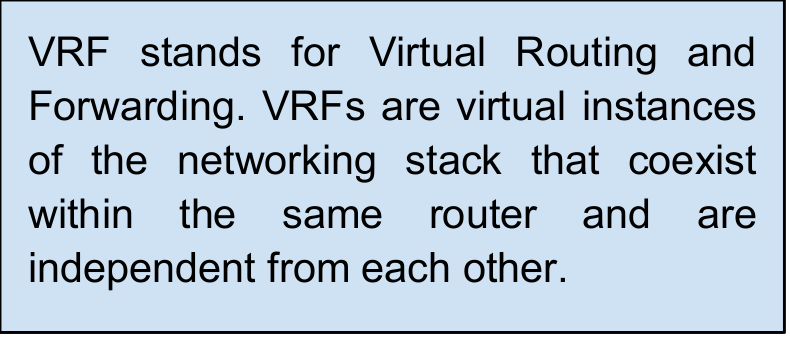

VRFs

The management VRF is created by default. It is used to configure remote access to the vRouter: the management interface and services such as SSH, DHCP and NTP. Other VRFs must be created to configure the networking plane, including data plane interfaces and network features such as BGP, filtering and IKE.

This approach relies on Linux network namespaces (netns). It ensures good isolation of services and, in the future, will make it possible to define CPU or memory limits for a given VRF.

Compatibility with existing Linux Day-1 configuration

Cloud-init is embedded in the vRouter for Day-1 configuration, that is, the initial configuration of the vRouter to enable basic console access. It can be used to configure the management interface, basic networking services such as DHCP and SSH, provision the SSH keys, etc.

The management engine is compatible with such cloud-init configuration, as it does not touch the configuration of network services (SSH, DNS, DHCP, etc.) as long as there is no configuration statement for them. When a configuration statement is present, it takes precedence over any existing, external configuration. Finally, a known service like SSH will be recognized and will not be restarted if it is not necessary.
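The precedence rule described above can be written down as a short Python sketch. It is a simplified model, not the management engine's code: the `effective_config` and `needs_restart` helpers, the service settings and the NTP server address are assumptions for illustration.

```python
def effective_config(external, managed):
    """Pick, per service, which configuration is in force.

    `external` holds settings provisioned outside the management engine
    (for example by cloud-init); `managed` holds settings that have a
    configuration statement in the management engine.
    """
    effective = {}
    for service in sorted(external.keys() | managed.keys()):
        if service in managed:
            # A configuration statement exists: it takes precedence.
            effective[service] = {"source": "management-engine",
                                  "settings": managed[service]}
        else:
            # No statement: the external configuration is left untouched.
            effective[service] = {"source": "external",
                                  "settings": external[service]}
    return effective


def needs_restart(running_settings, new_settings):
    """A service is restarted only if its settings actually change."""
    return running_settings != new_settings


external = {"ssh": {"port": 22}, "ntp": {"server": "192.0.2.1"}}
managed = {"ssh": {"port": 22}}

result = effective_config(external, managed)
print(result["ntp"]["source"])   # external
print(result["ssh"]["source"])   # management-engine
print(needs_restart(external["ssh"], result["ssh"]["settings"]))  # False
```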

To illustrate the concepts described above, here is a very simple example of configuring DHCP and enabling SSH on a management interface.

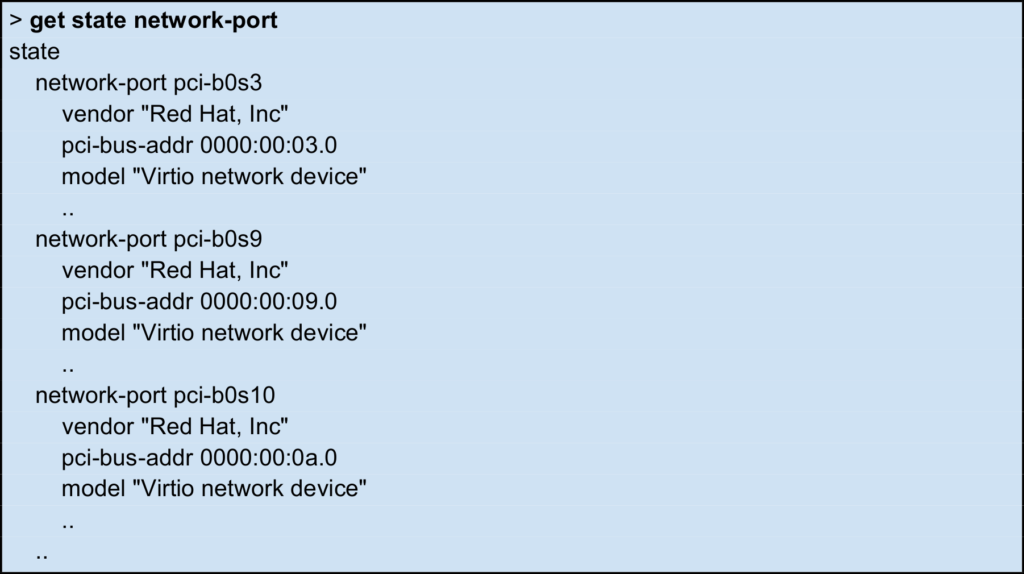

List the available network ports:

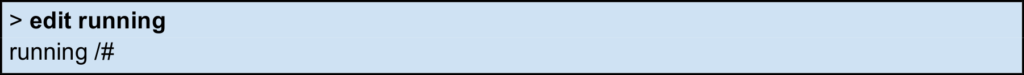

Start to edit the current configuration:

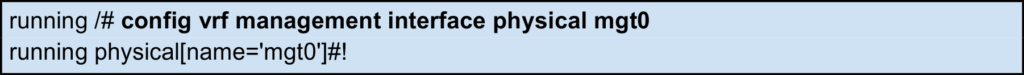

Create a management interface named mgt0 and associate it with the pci-b0s3 network port:

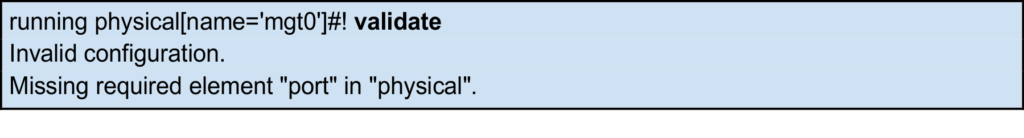

The exclamation mark at this step indicates that the configuration is incorrect. The validate command can be used to get more information:

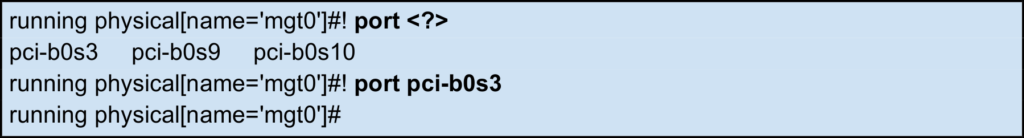

A port must be associated with the interface. The available ports can be listed using ?.

Once the port is set, the configuration is correct and the exclamation mark disappears.

Enable DHCP on mgt0:

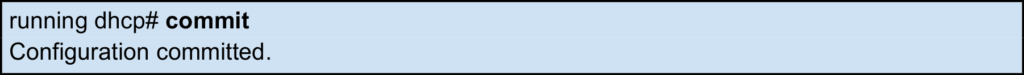

Commit the configuration:

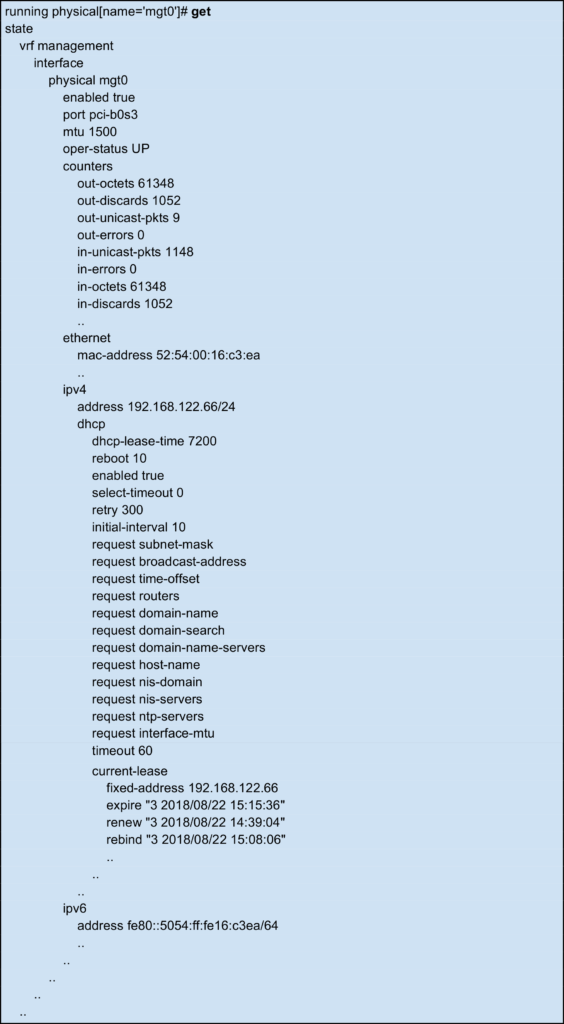

Retrieve the current system state to check that the configuration is correct. We can see the information we just configured, but also additional state information coming from the system, such as the MAC address, the interface operational status, the IP address obtained through DHCP and statistics counters.

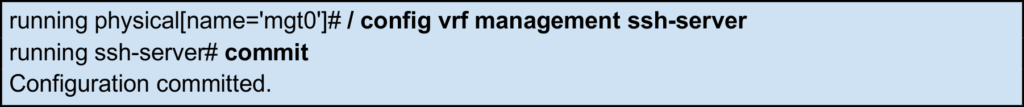

Now the vRouter can be accessed via a remote SSH client using the address acquired by DHCP.

We can make this configuration the startup one with:

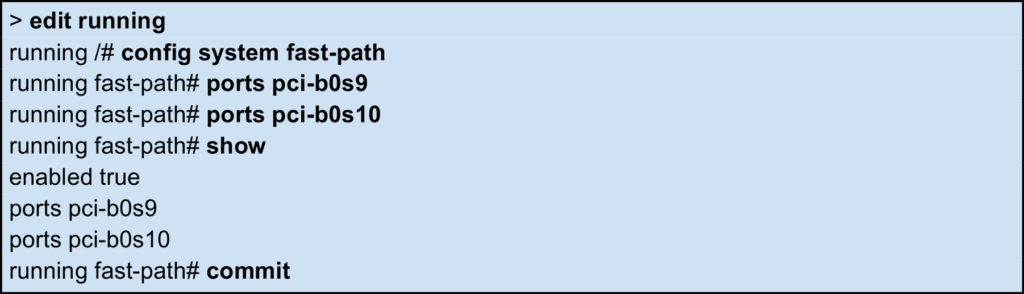

Now that remote access is saved in the startup configuration, it is time to configure the fast path. The fast path is the Virtual Border Router component in charge of packet processing. To be accelerated, Ethernet NICs must be dedicated to the fast path as follows:

Now that some ports have been dedicated to the fast path, we can start the networking configuration.

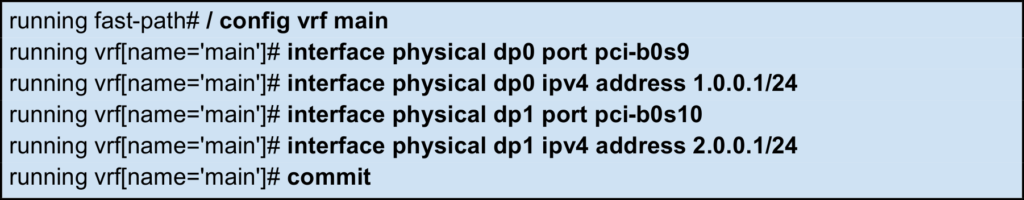

Let’s create the dp0 and dp1 interfaces in a single VRF called main and associate the two previous ports with them. The 1.0.0.1/24 address will be added to dp0, and the 2.0.0.1/24 address will be added to dp1.
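As a quick sanity check of this addressing plan, the following Python sketch uses the standard `ipaddress` module to derive the connected network of each interface and to confirm that the two subnets do not overlap. It runs independently of the vRouter; only the interface names and addresses come from the example above.

```python
import ipaddress

# Interface addresses from the example above.
interfaces = {"dp0": "1.0.0.1/24", "dp1": "2.0.0.1/24"}

networks = {}
for name, address in interfaces.items():
    iface = ipaddress.ip_interface(address)
    # A /24 prefix means the first three octets identify the connected network.
    networks[name] = iface.network
    print(f"{name}: address {iface.ip}, connected network {iface.network}")

# Each data plane interface sits on its own subnet.
print("overlap:", networks["dp0"].overlaps(networks["dp1"]))
```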

I hope this gives you a good feel for what the new vRouter management looks like. Feel free to send us your feedback or contact us if you want to evaluate the vRouter.

Stay tuned for our next software management blog post, where we will explore the configuration of routing functionalities in detail and introduce our FRR integration. Make sure you subscribe to our upcoming webinars, which will focus on management!

Yann Rapaport is Vice President of Product Management for 6WIND.